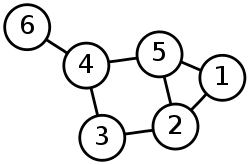

Problem Visualisation

Fro a computer, a graph can be represented as a dictionary of nodes and their neighbours, but this is hard for humans to understand and makes proble-solving much harder. An easier representation of a graph may look like this:

This makes problems involving a graph e.g. node traversal much easier to understand and work through.

Backtracking

You may not always have enough information to reach the solution, and this can be an issue in problems where there are multiple paths to the same solution, or different descisions branch off into more choices. Two examples of this are finding the exit in a maze, or getting a certain ending in a multiple-ending RPG e.g. The Stanley Parable.

Backtracking is a method of trying multiple different paths until you find one that works. DFS is one problem solving method that uses this. Note: this doesn;t find the shortest solution, only the first one that works.

Data mining

Big data refers to large data sets that cannot be easily handled or processed on a normal computer, and cannot be held in a traditional database. Data mining is the process of digging through such large datasets to find correlations and analyse the data. This is a new and popular field of study, being used in medicine, speech recognition, business and more.

Intractable problems

These are problems that can theoretically be solved, but would take a ridiculously long time to be solved, even with an algorithm.

One example is the Travelling Salesman Problem (TSP): What's the shortest route I can take to visit every town, no town more than once, and return where I started? By brute force, this would have a time complexity of O((n-1)) for n given towns, which is too much time.

Heuristics

Sometimes, you may not need a perfect answer to your problem, and an estimation or a range of values is enough. This can often be faster and / or easier than actually trying to solve your problem. For example, there may be a quicker route to school, but it's easier to take this path because it's a known route. This is an example of employing heuristics.

Performance modelling

An example of an application of heuristics. Where testing the actual performance of your system e.g. a network may be expensive, instead, mathematical approximations can be made to simulate your system and see how it handles e.g. a large user load and heavy traffic.

Pipelining

Pipelining is splitting a task up and processing these smaller tasks immediately one after another, allowing tasks to overlap e.g. in a microprocessor, while one task is being fetched, another is being decoded, and another is being executed.